Some rough fibrous material

The key word in my title is “rough”; Iʼm coming to the material with little or no practical knowledge. Iʼm not using Ruby 1.9 in my day job, and although I probably would use it for any spare-time hacking, itʼs very rare that I get down to any as I am, basically, lazy.

So apologies to anyone that knows this stuff already; I might get things wrong, or not cover everything in enough detail. Iʼm sorry.

What are fibers?

Fibers are an implementation of 2 important ideas:

- The first idea is “co-routines” (and this should sound familiar, as you’ll have heard of sub-routines, which are related)

- The second idea is “co-operative multitasking” (and again, you should recognise this as similar sounding to “pre-emptive multitasking”).

Weʼll take a quick detour to cover these in turn and then weʼll come back to Ruby.

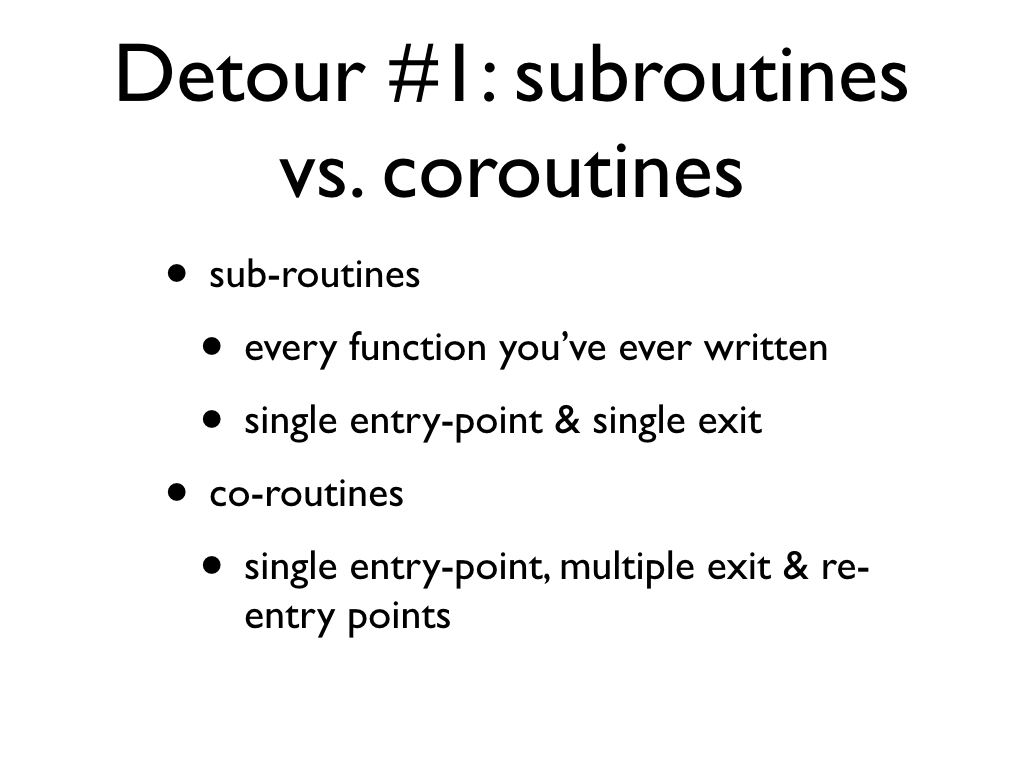

Detour #1: subroutines vs. coroutines

Pretty much every method or function you’ve ever written is a sub-routine. When you invoke them you start at the first line and run through them till they terminate and give you their result.

A co-routine is a little bit different. When you invoke one it also starts on the first line of code, but it can halt execution and exit before it terminates. Later you can re-enter it and resume execution from where you left off.

It’s also unlikely you’ll have written one yet: despite having been around for a while, co-routines aren’t provided as a feature by many languages.

Detour #1.a: subroutines

Here’s a simple subroutine example.

When you call a method the flow of control enters the function, and is trapped until the method terminates.

Once the method terminates, the flow of control is finally released to the caller. Here that happens with an explicit return, but it could equally be an exception, or simply running out of statements on the code path.

Detour #1.b: subroutines

Once you exit a sub-routine, the door is closed; you can’t return to it the way you came out.

To re-use the sub-routine, your only option is to re-invoke it and go back to the first line of code. This creates a new copy of the entire stack, so there’s nothing shared between this invocation and the previous ones, or any future ones. Depending on your code, this could be expensive.
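As a rough illustration (this is not the code from the slide), here is a tiny Ruby method that behaves the way this detour describes: every call starts again at the first line with fresh local variables. The method name `next_number` is made up for the sketch.

```ruby
# A plain sub-routine: each call starts at the first line with fresh locals.
def next_number
  count = 0      # re-created on every invocation
  count += 1
  return count   # control goes back to the caller; this call's state is gone
end

first_call  = next_number
second_call = next_number

puts first_call   # prints 1
puts second_call  # prints 1 again: nothing carried over from the first call
```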

Detour #1.c: coroutines

And here’s a similar example for a co-routine.

It starts pretty much the same way. The flow of control enters the method and is trapped until it provides a result, this time with a yield. However, unlike before, we can resume the method and send the flow of control back in to continue working, picking up where we were when we left off.

Detour #1.d: coroutines

What makes co-routines even more interesting is that we can yield and resume as many times as we want, until, of course, the co-routine comes to a natural termination point.

We can also have as many yields as we want; we don’t always have to yield from the same place. Having yielded at a given point, though, we resume at that same point; we can’t choose some other yield point to re-enter at.
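A minimal sketch of the same idea using Ruby's `Fiber` (again, not the slide's code; the variable name `counter` is just for illustration). It exits from two different yield points, keeps its local state between re-entries, and finally terminates naturally.

```ruby
# A co-routine built with Fiber: locals survive between exits and re-entries.
counter = Fiber.new do
  count = 0
  count += 1
  Fiber.yield count   # first exit point
  count += 1
  Fiber.yield count   # second exit point, on a different line
  count += 1
  count               # natural termination: the block's value is returned
end

results = [counter.resume, counter.resume, counter.resume]
puts results.inspect  # prints [1, 2, 3]

# The fiber has now terminated, so trying to re-enter it raises FiberError.
begin
  counter.resume
rescue FiberError => e
  puts "finished: #{e.class}"
end
```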

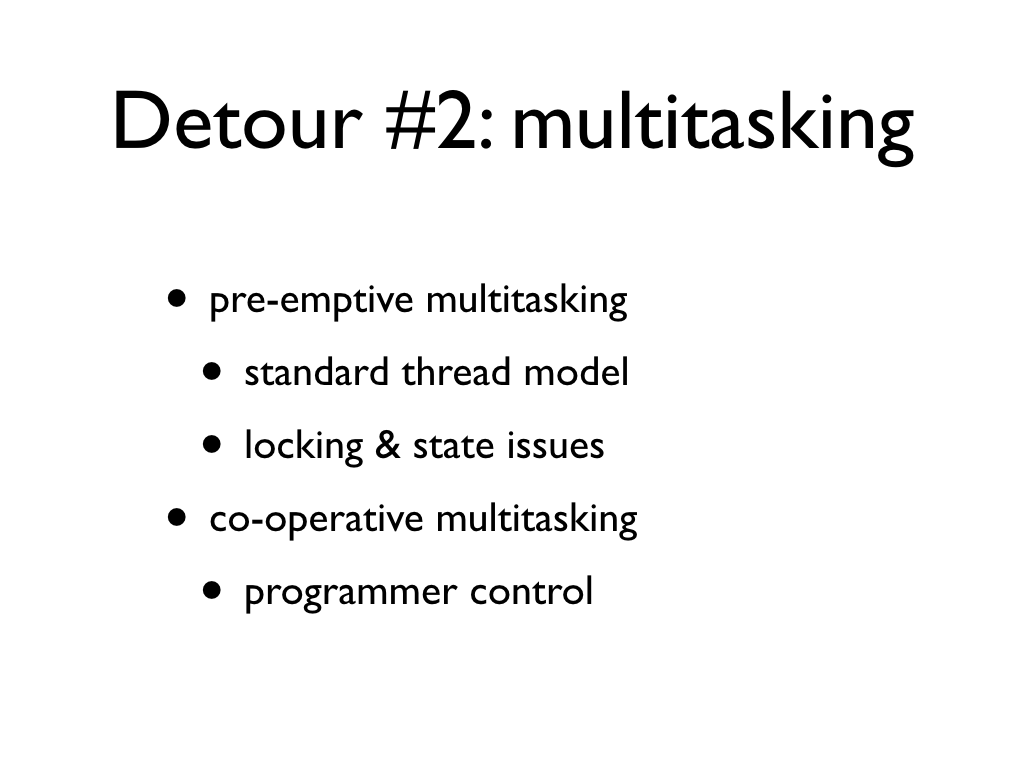

Detour #2: multitasking

You should be familiar with pre-emptive multitasking as it’s the standard model of concurrency used by most Thread implementations.

You have several tasks running at the same time, scheduled by the OS or language runtime. The gotcha is access to shared objects.

Fibers, however, use the co-operative model. Here no two tasks ever run at exactly the same time, and it’s up to the programmer to decide when each task gives up control and which task it passes control on to.

Detour #2.a: pre-emptive

The main problem with pre-emptive multitasking is that (on a single core machine) these two threads are given CPU time arbitrarily by some scheduler. They don’t know when in their life-cycle this’ll happen, so when thread alpha wants to access the shared data, it has to lock it. Unfortunately this means the shared data could remain locked while thread beta has the CPU time, so thread beta can’t do anything.
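To make the locking concrete, here is a small sketch with two Ruby threads incrementing a shared counter. It assumes nothing beyond core `Thread` and `Mutex`; the counter and the loop size are arbitrary choices for the example.

```ruby
# Pre-emptive style: the scheduler may switch threads at any moment,
# so every access to the shared counter is wrapped in a lock.
counter = 0
lock = Mutex.new

threads = Array.new(2) do
  Thread.new do
    1_000.times do
      lock.synchronize { counter += 1 }  # only one thread at a time gets in
    end
  end
end

threads.each(&:join)  # wait for both threads to finish
puts counter          # prints 2000
```

While one thread is inside `synchronize`, the other can be given CPU time and still have nothing to do except wait for the lock.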

Detour #2.b: co-operative

On the other hand, in co-operative multitasking, the fiber itself has explicit control of when the CPU will transfer away. This means it doesn’t need to lock anything because it’s safe in the knowledge that no other fiber will be running unless it says it’s done.

When the fiber is done (or happy that it’s done enough for now), it stops accessing the shared data and simply transfers control away to some other fiber.
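Here is the co-operative version as a sketch. Two fibers append to a shared array with no lock at all, because each one only stops at the point where it calls `Fiber.yield`. The round-robin loop at the bottom is an assumption made for the example; it is just one simple way of deciding who runs next.

```ruby
require 'fiber'  # for Fiber#alive? on Ruby 1.9; harmless on later versions

shared = []

# Each fiber does a little work on the shared data, then gives up control.
make_worker = lambda do |name|
  Fiber.new do
    3.times do |i|
      shared << "#{name} #{i}"  # no lock: nobody else can run right now
      Fiber.yield               # explicitly hand control back
    end
  end
end

fibers = [make_worker.call("alpha"), make_worker.call("beta")]

# A naive round-robin scheduler: resume each fiber in turn until all finish.
until fibers.empty?
  fiber = fibers.shift
  fiber.resume
  fibers << fiber if fiber.alive?
end

puts shared.inspect
# prints ["alpha 0", "beta 0", "alpha 1", "beta 1", "alpha 2", "beta 2"]
```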

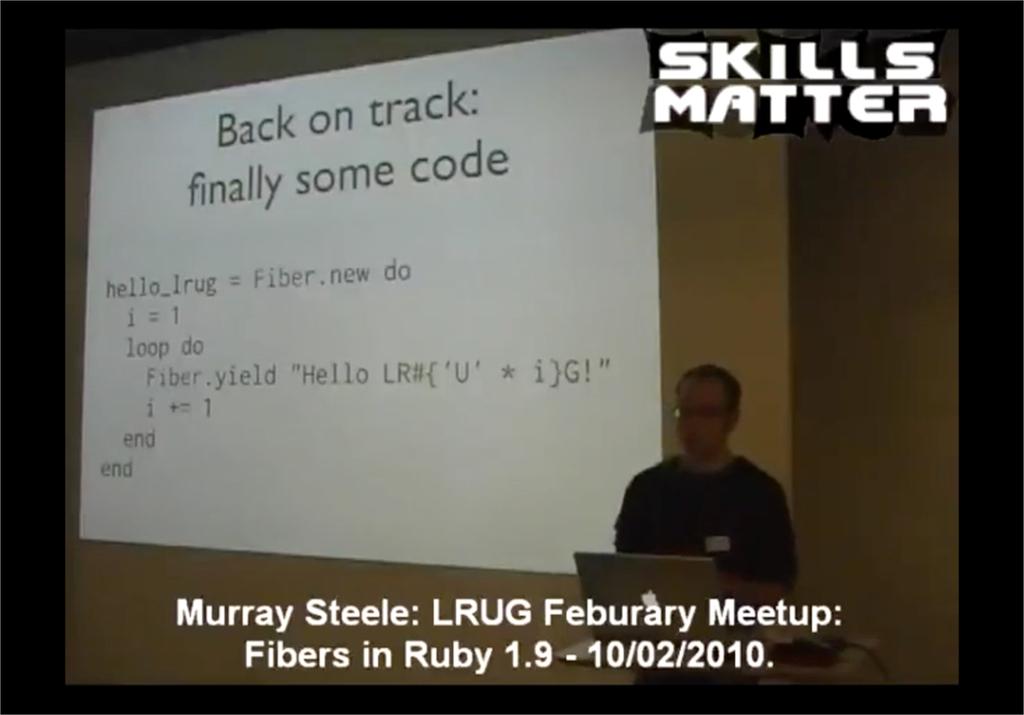

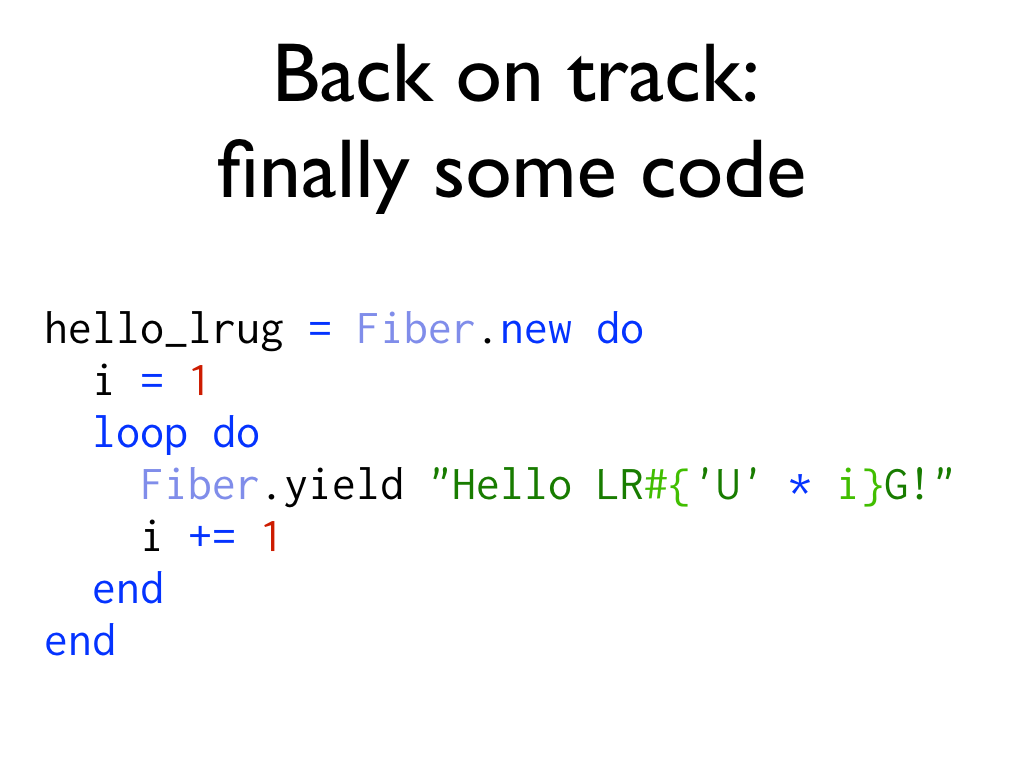

Back on track: finally some code

I’ve bored you with the science part, how about looking at some code?

If you’ve used threads in ruby this should be familiar. You create a Fiber by passing a block to a constructor. The block is the “work load” for that fiber. In this case an infinite loop to generate increasingly excited hellos to the LRUG crowd. Don’t worry about that pesky “infinite” though…

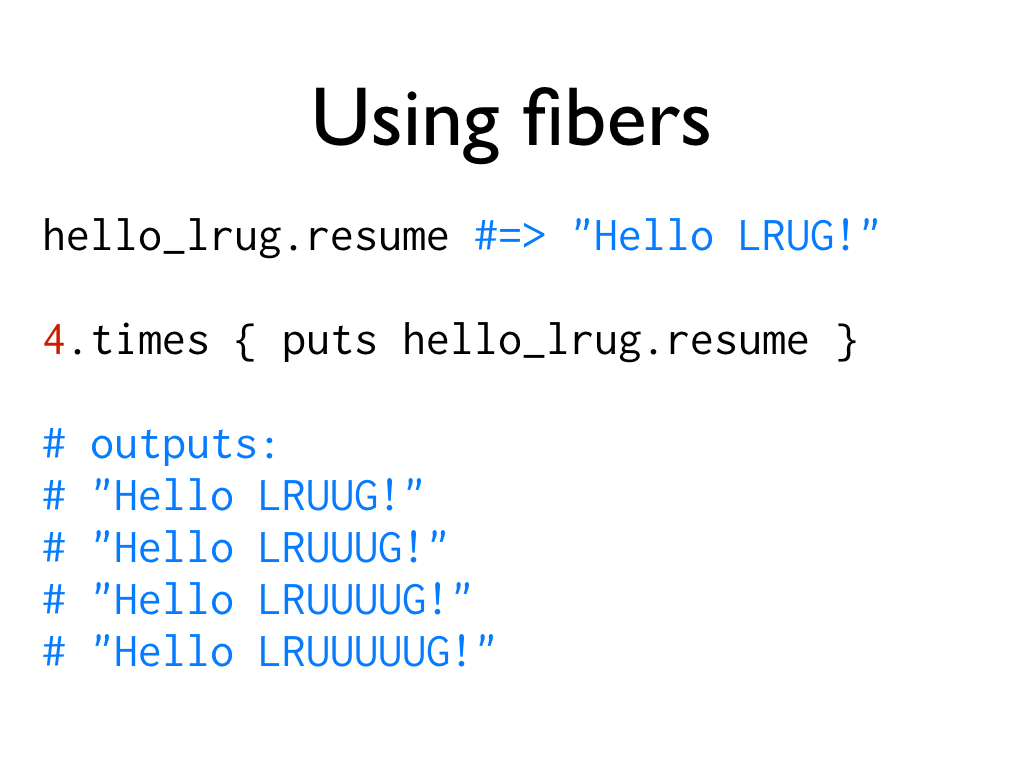

Using fibers

When you create a fiber, again just like a thread, it won’t do anything until you ask it to. To start it you call the somewhat chicken-before-the-egg resume method. This causes hello_lrug to run until it hits that Fiber.yield, which pauses execution of the fiber and hands the value passed to Fiber.yield back as the result of resume. You also use resume to re-enter the fiber to do some more work.
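The slide's code isn't reproduced in these notes, so what follows is a guess at its shape rather than the original: a `hello_lrug` fiber wrapping an infinite loop, with a `started` flag added purely to show that nothing runs until the first resume.

```ruby
started = false

# The block is the fiber's workload; creating the fiber does not run it.
hello_lrug = Fiber.new do
  started = true
  excitement = 1
  loop do
    Fiber.yield "Hello LRUG" + "!" * excitement  # pause and hand a value back
    excitement += 1
  end
end

puts started             # prints false: nothing has run yet

first  = hello_lrug.resume  # runs up to the first Fiber.yield
second = hello_lrug.resume  # re-enters just after that yield

puts first               # prints Hello LRUG!
puts second              # prints Hello LRUG!!
```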

Using fibers means never having to say you’re finished

So although we gave hello_lrug a workload that will never end, it’s not a problem because we use the yield and resume methods to explicitly schedule when hello_lrug runs. If we only want to run it 5 times and never come back to it, that’s ok, it won’t eat up CPU time. This gives us an interesting new way to think about writing functions; if they don’t have to end, lazy evaluation becomes super easy.
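To show the lazy side of this with something other than greetings, here is a sketch of an endless fiber that is only ever asked for a handful of values. Both `powers_of_two` and the `take_from` helper are made-up names for the example.

```ruby
# An endless workload: one power of two per resume, forever.
powers_of_two = Fiber.new do
  value = 1
  loop do
    Fiber.yield value
    value *= 2
  end
end

# Hypothetical helper: pull only as many values as we actually need.
def take_from(fiber, how_many)
  Array.new(how_many) { fiber.resume }
end

first_five = take_from(powers_of_two, 5)
next_three = take_from(powers_of_two, 3)

puts first_five.inspect  # prints [1, 2, 4, 8, 16]
puts next_three.inspect  # prints [32, 64, 128]
```

Between the two calls the fiber just sits there, suspended; no work is done for values nobody has asked for.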

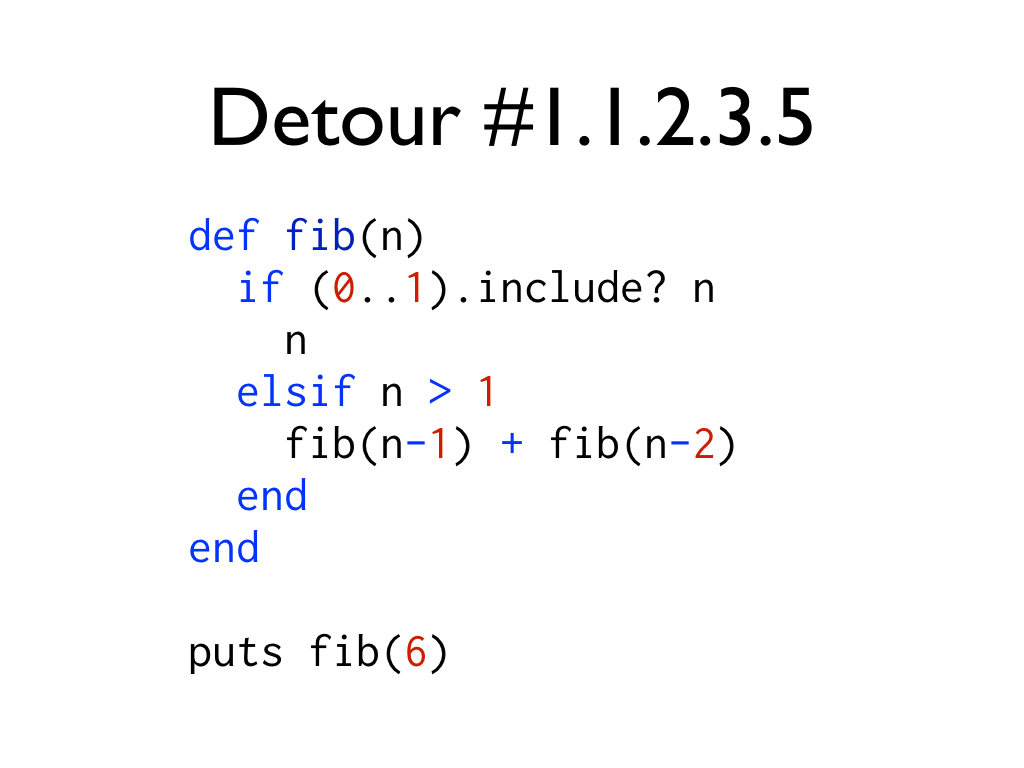

Detour #1.1.2.3.5

Hey, so what’s a talk without Fibonacci?

Here’s the standard implementation for generating a number in the Fibonacci sequence using ruby. It uses recursion, which you have to get your head around before you can see how it works (and that can be hard sometimes), and you have to take care to have the correct guard clauses so that the recursion terminates.
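A typical recursive version looks something like the sketch below. It may differ from the slide; in particular, it assumes the sequence is counted from 1 and starts 1, 1, 2, 3, 5, to match the detour's title.

```ruby
# Recursive Fibonacci, counting positions from 1: 1, 1, 2, 3, 5, 8, ...
def fib(n)
  raise ArgumentError, "n must be at least 1" if n < 1
  return 1 if n <= 2          # guard clause: stops the recursion
  fib(n - 1) + fib(n - 2)     # every other number is the sum of the two before
end

puts fib(6)  # prints 8
```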

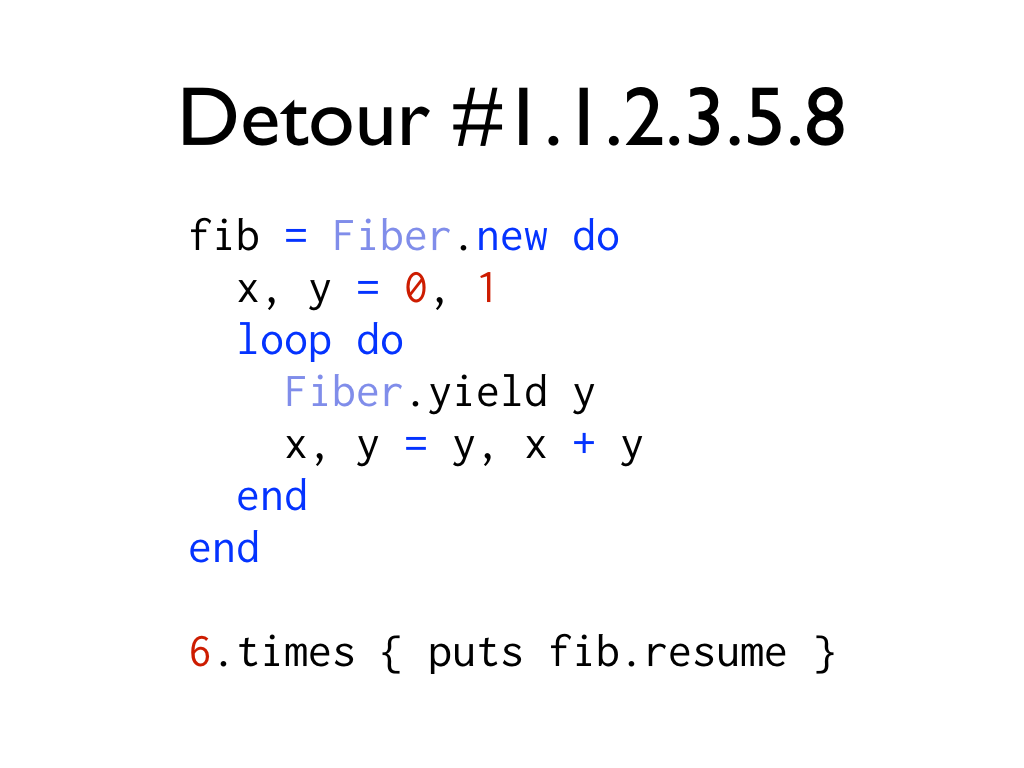

Detour #1.1.2.3.5.8

Here’s the fibrous way of doing it. Again, there is a fundamental concept you need to understand first (co-routines), but I do think this is a slightly more natural way of defining the sequence.

The difference is that to get the 6th number we have to call resume on the fiber 6 times, with the side-effect of also being handed the preceding 5 numbers in the sequence.
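A sketch of the fiber-based version, under the same assumption that the sequence starts 1, 1. The slide's code may be laid out differently.

```ruby
# Fibonacci as an endless fiber: each resume produces the next number.
fib = Fiber.new do
  a, b = 1, 1
  loop do
    Fiber.yield a
    a, b = b, a + b   # slide the window along the sequence
  end
end

# Getting the 6th number means resuming 6 times; we see the first 5 on the way.
first_six = Array.new(6) { fib.resume }

puts first_six.inspect  # prints [1, 1, 2, 3, 5, 8]
puts first_six.last     # prints 8
```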

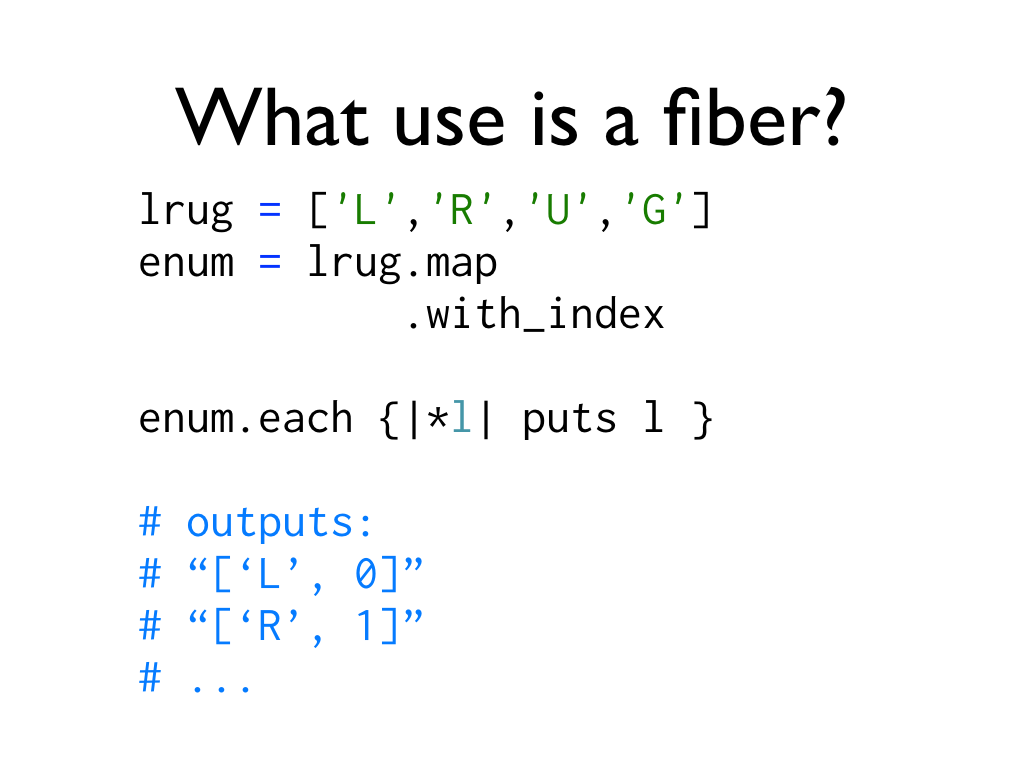

What use is a fiber?

This sort of lazy evaluation is where Fibers shine, and probably where they’ll see the most use.

And, in fact, it’s exactly this sort of thing that Fibers are being used for in the ruby 1.9 stdlib. Things like .each and .map have been reworked so that without a block they now return enumerators that you can chain together. And under the hood these enumerators are implemented using fibers.
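A short sketch of what that looks like from the outside, using only core `Array` and `Enumerator` methods. The external `next` style at the end is the part usually described as fiber-backed in MRI; treat that as background rather than something this snippet proves.

```ruby
letters = %w[a b c]

# Without a block, each returns an Enumerator instead of iterating.
enum = letters.each
puts enum.class        # prints Enumerator

# Enumerators chain: each_with_index has no block, so map receives the pairs.
labelled = letters.each_with_index.map { |letter, i| "#{i}:#{letter}" }
puts labelled.inspect  # prints ["0:a", "1:b", "2:c"]

# External iteration: pull values one at a time, much like resuming a fiber.
first  = enum.next
second = enum.next
puts first             # prints a
puts second            # prints b
```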

What practical use is a fiber?

That’s all a bit theoretical. What real use are fibers?

Well, I don’t know, so I did a quick search on github, and to my surprise there were actually plenty of results.

But… on closer inspection, the first few pages are entirely forks and copies of the Ruby specs for fibers. Which, by the way, I totally recommend reading if you want to get an idea how something in ruby actually works.

The first result that wasn’t a ruby spec requires another detour first.

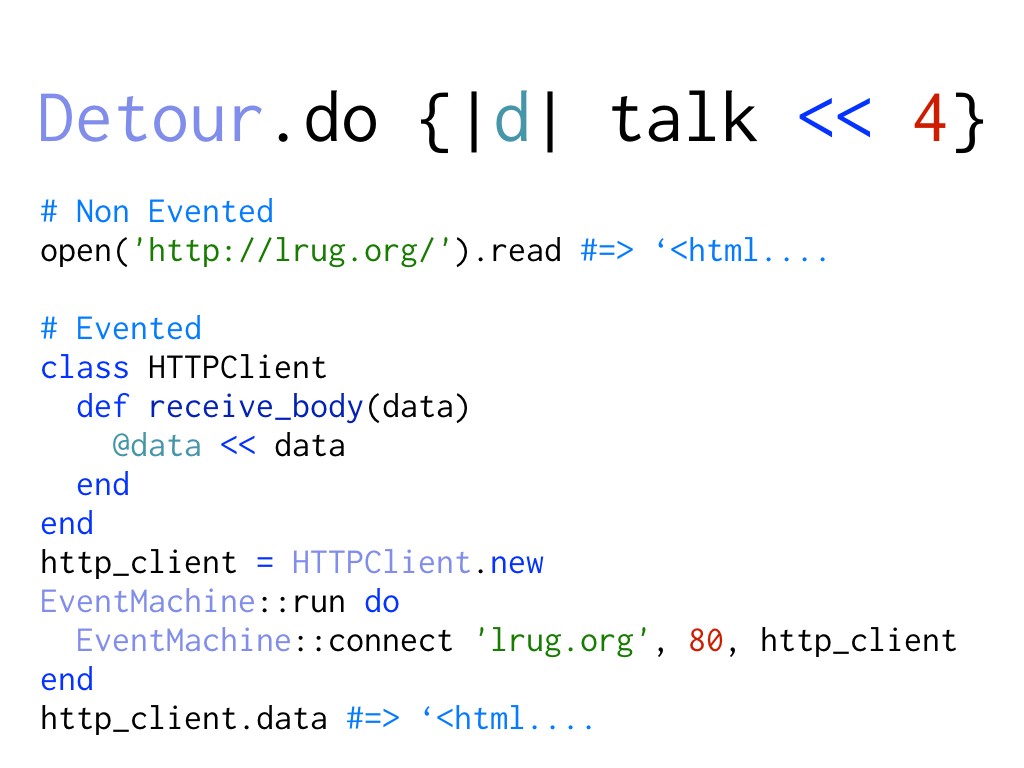

Detour.do {|d| talk << 4}

Well… another quick detour. If you’ve ever done any evented programming you’ll know that the code is very different looking to normal code.

Here’s a simplified example of how to read a webpage. For the normal case it’s really simple, you just call a couple of methods.

The evented case, not so much. You have to rely on callback methods and keep some object around to hold the result of those callbacks. What you lose in a simplified API you gain in performance and flexibility, but it’s hard to get your head around.
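The slide's example isn't in these notes, so here is a toy stand-in that shows the difference in shape without touching the network. `ToyEventLoop` and `blocking_get` are hypothetical and only pretend to fetch a page; a real evented library would have its own API.

```ruby
# Hypothetical stand-in for an evented HTTP client; no real network access.
class ToyEventLoop
  def initialize
    @pending = []
  end

  # Registers a callback and returns immediately, before any "response".
  def get(url, &callback)
    @pending << [url, callback]
  end

  # Pretends each response has arrived and fires the matching callback.
  def run
    until @pending.empty?
      url, callback = @pending.shift
      callback.call("<html>#{url}</html>")
    end
  end
end

# Normal (blocking) style: call a method, get the page back.
def blocking_get(url)
  "<html>#{url}</html>"   # pretend we waited for the response here
end

page = blocking_get("http://example.com/")

# Evented style: hand over a callback and keep an object around for the result.
event_loop = ToyEventLoop.new
results = {}
event_loop.get("http://example.com/") { |body| results[:page] = body }

puts results.empty?   # prints true: the callback has not fired yet
event_loop.run
puts results[:page]   # prints <html>http://example.com/</html>
```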

So…what is a practical use for a fiber?

The first non-ruby spec result on github that uses fibers was: Neverblock.

This library uses Fibers, Event Machine and other non-blocking APIs to present you with an API for doing asynchronous programming that looks remarkably synchronous. So you don’t have to change your code to get the benefit of asynchronous performance.
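The general trick can be sketched in a few lines. To be clear, this is not Neverblock's API or implementation, just a toy showing how a fiber can hide a callback: `ToyEventLoop` and `fetch` are hypothetical names, and the "responses" are faked.

```ruby
require 'fiber'  # for Fiber.current on Ruby 1.9; harmless on later versions

# Hypothetical evented client: queues callbacks, fires them later in run.
class ToyEventLoop
  def initialize
    @pending = []
  end

  def get(url, &callback)
    @pending << [url, callback]
  end

  def run
    until @pending.empty?
      url, callback = @pending.shift
      callback.call("<html>#{url}</html>")
    end
  end
end

# Reads like a blocking call, but really suspends the calling fiber
# until the callback fires and resumes it with the response body.
def fetch(event_loop, url)
  fiber = Fiber.current
  event_loop.get(url) { |body| fiber.resume(body) }
  Fiber.yield   # the value passed to resume becomes this method's result
end

event_loop = ToyEventLoop.new
pages = []

worker = Fiber.new do
  pages << fetch(event_loop, "http://example.com/a")  # looks synchronous
  pages << fetch(event_loop, "http://example.com/b")
end

worker.resume    # runs until the first fetch suspends the fiber
event_loop.run   # delivers responses, resuming the fiber each time

puts pages.inspect
# prints ["<html>http://example.com/a</html>", "<html>http://example.com/b</html>"]
```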

I won’t go into details (I only have 1 more slide!), but you should check it out if you’re interested.

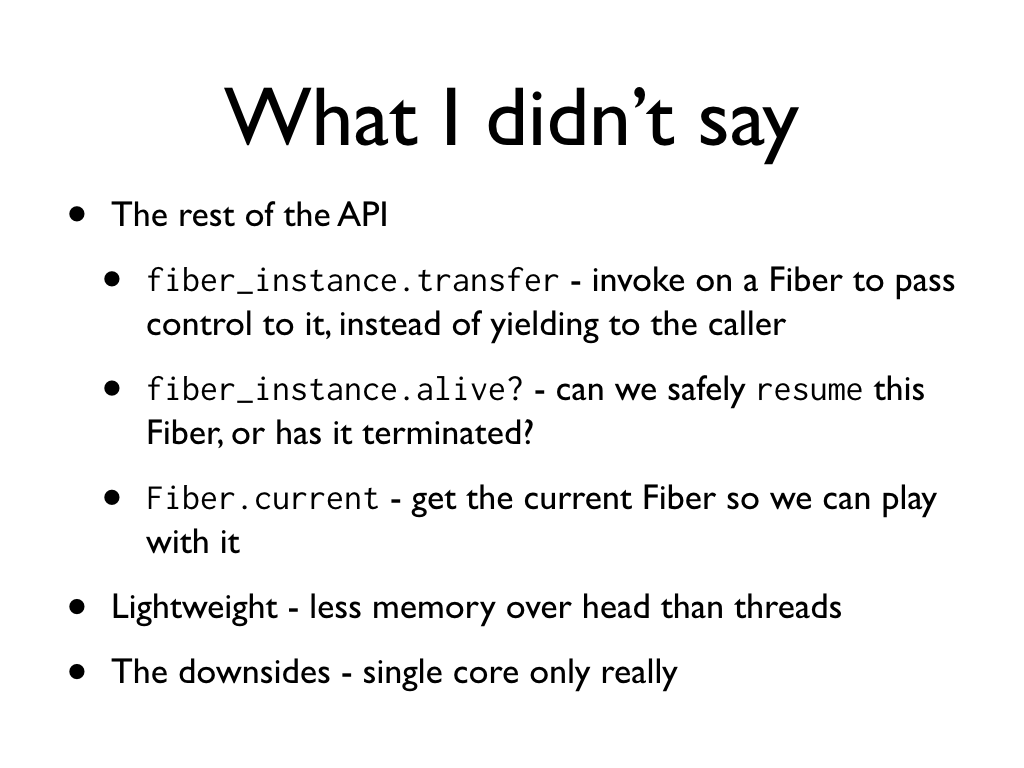

What I didn’t say

Last slide. There’s loads I didn’t cover, but I think I got the basics.

There are 3 remaining API methods (apart from resume and yield, which I already covered). Fiber#transfer is like yield, but instead of giving the CPU back to the caller, you give it to the fiber you called transfer on. The other two, Fiber#alive? and Fiber.current, are simple enough.
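A small sketch of those remaining methods, assuming they are `Fiber#transfer`, `Fiber#alive?` and `Fiber.current` (loaded with `require 'fiber'` on 1.9). The `manager` and `worker` names are invented for the example.

```ruby
require 'fiber'  # needed for transfer, alive? and current on Ruby 1.9

log = []
manager = nil  # declared first so the worker block can see it

# Started via transfer, so it hands control on with transfer, not yield.
worker = Fiber.new do |work|
  log << "worker got #{work}"
  manager.transfer(work.upcase)   # pass control (and a value) to manager
end

manager = Fiber.new do
  log << "manager is current: #{Fiber.current.equal?(manager)}"
  result = worker.transfer("something")  # control goes to worker, not our caller
  log << "manager got #{result}"
end

manager.resume

puts log.inspect
# prints ["manager is current: true", "worker got something", "manager got SOMETHING"]
puts manager.alive?  # prints false: its block ran to the end
puts worker.alive?   # prints true: it is still paused at its transfer call
```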

Fibers are supremely lightweight: spinning up fibers takes much less memory than spinning up a thread. There’s a good comparison from the author of the Neverblock gem.

The downside is that they are a single-core solution really, and weʼre increasingly heading towards a multi-core world.

It’s over

Thanks for listening!

Research links

Bonus list of links to my research:

- https://pinboard.in/u:h-lame/t:fibers (most of the stuff I researched is here)

- http://github.com/oldmoe/neverblock

- http://en.wikipedia.org/wiki/Fiber_(computer_science)

- http://en.wikipedia.org/wiki/Coroutine